AI Cost Volatility: A New Risk for Security Leaders

For years, the narrative among influencers, futurists, and AI leaders has been that we should prepare for the cost of intelligence to plunge to zero. This narrative is already breaking. The example below is just one of the many from the previous couple of years.

Although this narrative certainly made for catchy lines and applause breaks at conferences, reality paints a different picture. AI is becoming one of the most volatile and expensive dependencies in modern systems, and most organizations aren’t prepared for what comes next.

The $20 A Month Myth

Many users and enterprises were sold on the idea of a $20-a-month AI subscription. For $20, you get an all-you-can-eat buffet of AI. But this was always the juicy worm concealing the hook, and the deal is disappearing much like the cheap all-you-can-eat buffets in Vegas.

The reality is that tokens used for generative AI are subsidized. For startups like OpenAI and Anthropic, the tokens are subsidized by venture capital. For hyperscalers like Google, Microsoft, and Amazon, tokens are subsidized by profitable business units. Coincidentally, this also allows them to dress up any profits as wins for AI, even when the two may be unrelated, but that’s a different topic. Regardless, these are all investments, and investors and shareholders want returns.

We are already witnessing these changes. The past few weeks have started to shed light on this new reality. I covered some of these economic issues in my take on Mythos. One of the most shocking developments is that the Uber CTO reported that the company has already blown through its entire 3.4 billion AI budget in the first few months of 2026 due to Claude Code.

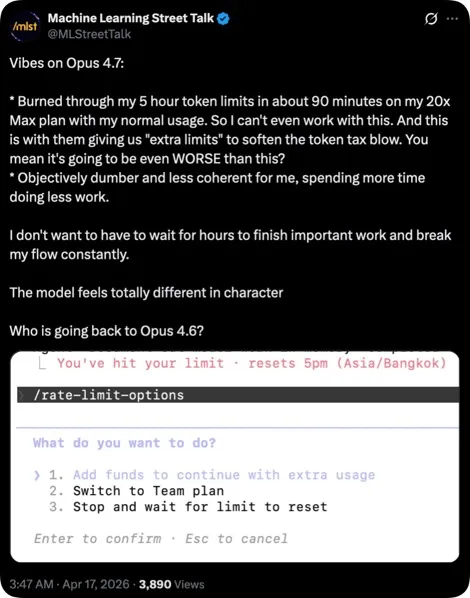

The usage limits and price hikes aren’t going unnoticed. The Machine Learning Street Talk team’s take on Opus 4.7 and the usage limits is not positive. Keep in mind that this is the $200-a-month plan.

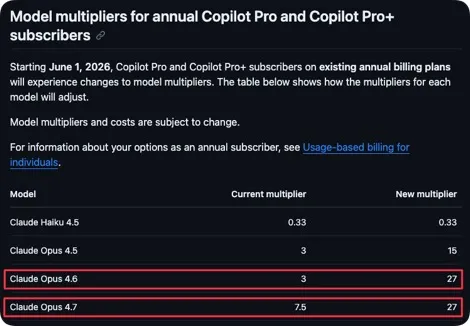

More recently, Microsoft raised eyebrows among users as GitHub Copilot subscription costs skyrocketed, with the multiplier for Claude models rising to 27x, in one case from a previous 3x.

The next generation of LLMs may net some performance gains, but will also be much more expensive. For example, the Claude Mythos Preview model is priced 5x over Opus 4.7, which is the current best Claude model.

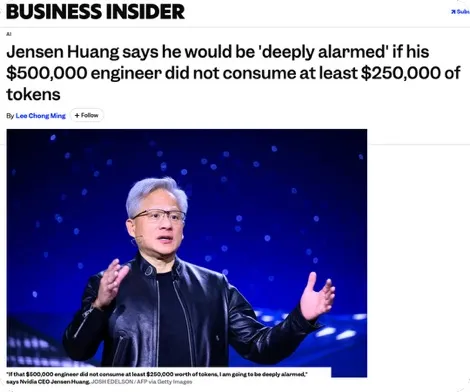

Everywhere you turn, prices are increasing. Seems intelligence isn’t free after all. It’s in this environment that Nvidia’s CEO says he’d be alarmed if employees use less than half their salary in tokens.

Of course, all this increased token usage benefits Nvidia, but they are far from the only ones pushing this narrative.

The moral of the story is that what you pay is far less than what it costs. It doesn’t take a business degree to know that this is unsustainable. The hope was always built on speculation. Speculation that generative AI will skyrocket with the arrival of AGI (or near-AGI), and companies will be able to replace employees. To date, announcements by companies about layoffs due to AI have mostly been AI washing.

There’s a good chance that if the companies charged what it actually costs them, many customers would not have bothered to try the technology in the first place.

So, what does all of this mean for security leaders?

Security Leader Impacts

Generative AI pilots and products have some false assumptions built into them. One of them is that tomorrow’s costs will be the same as (or lower than) today’s. This just isn’t the case. Costs can climb quickly even with more controlled price increases, and it’s possible for them to double or triple in short order. This calculus needs to be built into budgets.

In this environment, there’s another realization. AI can be more expensive than human employees, as Fortune covers. So the figure may not be just half a salary in tokens, but more than a full salary, and in subsidized tokens at that.

A study from MIT found that AI was cheaper than humans only 23% of the time. Granted, they were only studying computer vision use cases. However, computer vision use cases are interesting because you’d expect that the AI would be far more capable for less money at this point.

But the real problems surface with deployment and reliance on AI tools and products in production use cases. Applications and products could become half-functional or non-functional when usage limits are hit or budgets are expended. This creates a new operational risk. When a critical system depends on tokens, it forces a decision: pay more or stop functioning. Think of this as a token ransom. It’s scary to consider how the ransomware of the future may actually be an inflated token cost.

Unfortunately, with deployments deep in production environments, many organizations are also increasing technical debt, meaning it won’t be easy to pivot to another solution or roll back to the way things were before. Downgrading models or rolling back processes may reduce capabilities and leave wide gaps in a solution. You need a plan.
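One way to plan for the pay-more-or-stop decision is to make the application aware of its own spend, so it degrades on purpose instead of failing outright. The sketch below is illustrative only: the `TokenBudget` class, its 80% threshold, and the mode names are assumptions made for this example, not part of any provider's API.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Hypothetical spend tracker for one AI-dependent feature."""
    monthly_limit_usd: float
    degrade_at: float = 0.8   # assumed fraction of budget that triggers degraded mode
    spent_usd: float = 0.0

    def record(self, cost_usd: float) -> None:
        """Add the cost of a completed model call to the running total."""
        self.spent_usd += cost_usd

    def mode(self) -> str:
        """Return how the feature should behave at the current spend level."""
        used = self.spent_usd / self.monthly_limit_usd
        if used >= 1.0:
            return "fallback"   # budget exhausted: use the non-AI path
        if used >= self.degrade_at:
            return "degraded"   # near the limit: cheaper model or fewer calls
        return "full"


budget = TokenBudget(monthly_limit_usd=1000)
budget.record(500)
mode_after_half = budget.mode()       # well under the threshold
budget.record(400)
mode_near_limit = budget.mode()       # 90% of budget used
budget.record(200)
mode_over_limit = budget.mode()       # budget exceeded
```

The point of the design is that the "fallback" behavior is decided and tested ahead of time, rather than discovered when a usage limit is hit in production.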

Security Leader Recommendations

The first thing an organization needs is an AI strategy. The number of companies experimenting with AI without this strategy is remarkable. Not having an AI strategy increases the likelihood of wasting time and money. The AI strategy should outline the goals and business objectives, establish initial governance, and cover build-versus-buy decisions. Although a full breakdown of what should be included in a formal AI strategy is outside the scope of this post, some of the observations and recommendations below are worth considering.

Expect Price Increases

For projects using cutting-edge foundation models from model providers, you should budget at least 2x to 3x your expected cost. If we look at AI’s use in development, we see that actual costs for enterprises range from $200 to $500 per developer per month, with power users hitting $500 to $2,000 per month. These rates are far above the $20-a-month charges companies may expect.
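To make the arithmetic concrete, here is a rough sketch that turns the per-developer ranges above into an annual budget range with a 2x to 3x buffer. The team size, the split between typical and power users, and the names `SeatProfile` and `annual_budget_range` are hypothetical and exist only for this example.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class SeatProfile:
    """Hypothetical group of developers with a monthly spend range in USD."""
    name: str
    headcount: int
    monthly_low: float
    monthly_high: float


def annual_budget_range(profiles: List[SeatProfile],
                        low_multiplier: float = 2.0,
                        high_multiplier: float = 3.0) -> Dict[str, float]:
    """Return expected annual cost and the buffered budget range."""
    expected_low = sum(p.headcount * p.monthly_low for p in profiles) * 12
    expected_high = sum(p.headcount * p.monthly_high for p in profiles) * 12
    return {
        "expected_low": expected_low,
        "expected_high": expected_high,
        "budget_low": expected_low * low_multiplier,
        "budget_high": expected_high * high_multiplier,
    }


# Assumed team: 40 typical developers and 10 power users.
team = [
    SeatProfile("typical", headcount=40, monthly_low=200, monthly_high=500),
    SeatProfile("power", headcount=10, monthly_low=500, monthly_high=2000),
]
estimate = annual_budget_range(team)
```

For this assumed team of 50, the expected annual cost lands between $156,000 and $480,000, and the buffered budget between $312,000 and $1,440,000. Compare that with the $12,000 a year that 50 seats at $20 a month would suggest.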

Although use cases will vary, budgeting more is becoming a necessity. There are trade-offs involved, so, as with any investment, you’ll want to be sure you are actually getting ROI. Part of this is identifying problems first and having solid benchmarking and success criteria.

Identify Problems First

The excitement of AI experimentation often overrides the sense that a problem is necessary. Building a solution first and then identifying a problem afterward is a poor path to success. This is the Jeff Goldblum approach. In Jurassic Park, Goldblum’s character said, “Your scientists were so preoccupied with whether they could that they didn’t stop to think if they should.”

In a business sense, the “if you should” needs to tie back to an actual problem, with its benefits and trade-offs weighed. What is the goal? What are you trying to accomplish? Does this align with your AI strategy? These and many other questions should be asked before investing in developing a solution.

Establish Benchmarking and Success Criteria

Without benchmarking and success criteria, you may end up blinding yourself with an experiment that technically works but isn’t better than alternative approaches, or even your legacy processes. You also won’t be able to answer the glaring question of whether it’s better to have a human do something.

This needs to be measured beyond outputs alone. Measuring productivity purely by output is a poor proxy for true productivity. To take development as an example, people measure AI productivity in lines of code. But lines of code just tell you that you have more of something. They don’t tell you about usage, effectiveness, maintainability, security posture, or any of the other valuable factors you’d care about in practice.

Ensure that what you are measuring is a true indicator of success and not just a variable that can be maximized. This requires taking the entire process into account and considering downstream impacts. Simply asking, “Can we do this with AI?” is not a substitute for a real problem and can lead to significant issues and technical debt.

Lastly, these criteria need to be specified before the experimentation starts. Otherwise, how will you know if you are making progress or not? You may end up specifying measures that support your approach rather than the reality of the situation.

Reduce Unnecessary Usage

Many AI experiments are nothing but throwing AI at use cases and seeing what happens, or more specifically, seeing if it simply works. There is little consideration for how things should actually be done. Many companies have a backlog of outstanding problems. With the arrival of AI, they are hoping to address them. However, many of these problems could have been addressed previously with even simpler forms of automation. It’s just that there was no incentive to do so.

Explore solving problems with more deterministic methods, either in whole or in part. These approaches will be faster, more reliable, more resilient to attack, and monumentally cheaper. Ask, how else could this be done? Seeking the alternative is a potentially illuminating experience. It can also provide input for benchmarking by giving you another approach to benchmark against.
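As a small illustration of solving a problem "in part" with deterministic methods, the sketch below tries a plain rule first and only calls a model when the rule finds nothing. The alert format, the severity labels, and the `llm_fallback` callable are all assumptions for this example. In a real system the fallback would wrap whatever model client you already use.

```python
import re
from typing import Callable, Tuple

# Assumed convention: alerts usually state their severity as a plain word.
SEVERITY_PATTERN = re.compile(r"\b(CRITICAL|HIGH|MEDIUM|LOW)\b", re.IGNORECASE)


def classify_severity(alert_text: str,
                      llm_fallback: Callable[[str], str]) -> Tuple[str, str]:
    """Return (severity, source), where source is 'rule' or 'model'."""
    match = SEVERITY_PATTERN.search(alert_text)
    if match:
        # Deterministic path: no tokens spent, same answer every time.
        return match.group(1).upper(), "rule"
    # Only text the rule cannot handle reaches the paid model call.
    return llm_fallback(alert_text), "model"


def stub_model(text: str) -> str:
    """Stand-in for a real model call, used only in this example."""
    return "UNKNOWN"


by_rule = classify_severity("Severity: high. Multiple failed logins.", stub_model)
by_model = classify_severity("Odd login pattern from a new device.", stub_model)
```

Tracking how often the `"model"` path is taken also gives you a cheap baseline to benchmark against: if the rule handles most inputs, the model only needs to justify its cost on the remainder.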

Most of all, don’t encourage excessive use or tell your team to “just use AI for everything.” Some companies are using leaderboards to encourage more use, gamifying the use of AI tools and approaches. This is a bad idea. Not only is this excessively costly, but increased usage does not necessarily translate into greater productivity, as we’ve already mentioned.

Explore Small Models

Of the security leaders I talk to, almost none are discussing or considering using small models. Granted, these leaders are struggling with many AI-related problems, but this will need to change. The cost and capability of small models almost ensure that they’ll be a critical part of the future.

Fine-tuning small models for purpose-specific tasks can increase their effectiveness, reducing reliance on cutting-edge foundation models for all tasks. Using small models in an architecture can significantly reduce costs. But the benefits don’t end with cost. This approach puts you in control, so you won’t be affected by changes in capabilities and other constraints in providers’ models and services.

Granted, there are trade-offs with this approach as well, and deploying small models takes a bit of work. You’ll need to identify scenarios where small models can be deployed effectively, and depending on how you approach it, you may need to manage the training and infrastructure yourself. But when done correctly, the rewards speak for themselves.
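One way small models can fit into an architecture is a simple router that sends known, narrow task types to a small model and everything else to a larger one. This is a minimal sketch under stated assumptions: the task names are made up, and the two model functions are stubs standing in for whatever small and large models you actually deploy.

```python
from typing import Callable, FrozenSet, Tuple

ModelFn = Callable[[str], str]


def make_router(small_model: ModelFn,
                large_model: ModelFn,
                small_model_tasks: FrozenSet[str]) -> Callable[[str, str], Tuple[str, str]]:
    """Build a function that picks a model based on the task type."""
    def route(task_type: str, prompt: str) -> Tuple[str, str]:
        if task_type in small_model_tasks:
            return "small", small_model(prompt)
        # Unknown or open-ended tasks default to the larger model.
        return "large", large_model(prompt)
    return route


# Stubs used only for this example.
def small_stub(prompt: str) -> str:
    return "small:" + prompt


def large_stub(prompt: str) -> str:
    return "large:" + prompt


# Hypothetical task types that a fine-tuned small model handles well.
route = make_router(small_stub, large_stub,
                    frozenset({"log_triage", "ticket_tagging"}))

triage_result = route("log_triage", "sshd: failed password for root")
report_result = route("incident_report", "Summarize last week's incidents")
```

Defaulting unknown task types to the large model is a deliberately conservative choice here. The allowlist grows only as you confirm, against your own benchmarks, that the small model is good enough for a given task.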

Ensure You Can Pivot

The key to success in our current era is agility. When building or deploying solutions, ensure your architecture allows for pivoting. Swapping out or testing different models should be an essential part of your architecture. If there is a need to pivot away from one model provider or specific model, this should be easy to do.

Keep in mind, easy by design doesn’t mean effortless in practice. You’ll need to ensure the pivot doesn’t negatively impact your product or approach, and that testing is done in advance to avoid affecting a production application or service.
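A rough sketch of what this can look like in code: the application depends on a small interface, the concrete provider is chosen by configuration, and a fixed set of regression cases is run against any candidate before a switch. Everything named here (`TextModel`, the stub classes, the registry keys, and the test cases) is hypothetical. Real adapters would wrap your providers' actual clients.

```python
from typing import Callable, Dict, List, Protocol, Tuple


class TextModel(Protocol):
    """The only interface the application is allowed to depend on."""
    def complete(self, prompt: str) -> str:
        ...


class PrimaryModelStub:
    """Stand-in for the adapter around the current provider."""
    def complete(self, prompt: str) -> str:
        return "ALLOW" if "benign" in prompt else "BLOCK"


class CandidateModelStub:
    """Stand-in for the adapter around a possible replacement."""
    def complete(self, prompt: str) -> str:
        return "BLOCK" if "malicious" in prompt else "ALLOW"


REGISTRY: Dict[str, Callable[[], TextModel]] = {
    "primary": PrimaryModelStub,
    "candidate": CandidateModelStub,
}


def load_model(config: Dict[str, str]) -> TextModel:
    """Pick the provider from configuration instead of hard-coding it."""
    return REGISTRY[config["provider"]]()


def regression_failures(model: TextModel,
                        cases: List[Tuple[str, str]]) -> List[str]:
    """Return the prompts where the model's output differs from the expected one."""
    return [prompt for prompt, expected in cases
            if model.complete(prompt) != expected]


CASES = [
    ("benign newsletter", "ALLOW"),
    ("malicious attachment", "BLOCK"),
    ("unlabeled message", "BLOCK"),
]

primary_failures = regression_failures(load_model({"provider": "primary"}), CASES)
candidate_failures = regression_failures(load_model({"provider": "candidate"}), CASES)
```

In this toy setup the switch itself is a one-line configuration change, yet the candidate fails the "unlabeled message" case that the primary passes. That gap is exactly what advance testing is meant to surface before production does.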

Ask Your Vendors Tough Questions

Everyone is pushing AI to the max at the moment, and this includes the products that you use or are considering purchasing. Vendors face the same struggles as everyone else in the industry, and they aren’t going to absorb the increased cost of AI.

Ask your vendors about their plans for the increased costs. How are these increases going to affect their products and services, and what does this mean for you? Ask about their contingencies and how they expect to maintain their services.

For vendors whose tools bill usage to your own API key, keep in mind that their inefficiencies and their reliance on cutting-edge models negatively affect your bill. To a certain extent, your bill isn’t their concern. Address this issue with them. Having your vendors focus on efficiency, not just capability, is vital. If they don’t provide satisfactory answers, do not deploy their products in critical areas where they can’t be easily removed.

Conclusion

The narrative of free intelligence was always more marketing than math. What we are witnessing now is part of a correction, but it’s far from the end of the road. As subsidies shrink, budgets collide with economic reality. This condition will require vigilance and planning by security leaders to manage both expectations and budgets.