A Reasoned Take On Mythos For Security Leaders

By now, everyone has heard of Anthropic’s Mythos and Project Glasswing, and as a result, everywhere you turn, it seems chaos is ahead. We are told that either we are on the precipice of a vulnerability apocalypse or we are witnessing the end of cybersecurity. Much of this perspective is nothing more than the breathless speculation and hype we are accustomed to in the current era.

However, we shouldn’t let this lull us into a false sense of security, thinking that AI is nothing but hype. There are real advancements happening in the space, and things are changing rapidly. If there’s one lesson we should learn in the current AI era, it’s to take a breath after these announcements have been made and gain some clarity.

But there’s some good news: we already know how to address many of the concerns raised by the Mythos announcement today. We have an opportunity to double down on what works by focusing on strong fundamentals. AI doesn’t make these fundamentals obsolete. It makes them more important.

Taking A Breath

Making strategic decisions under fear is never a good approach. It typically leaves you just as lost as when you started, only lighter in your wallet and further from your goals.

A valuable skill for the current AI era is to take a breath whenever one of these announcements is made. The ability to hold more than one thought in our heads at the same time is essential. We need to realize that something can be overhyped and also useful. This is the landscape we find ourselves in with generative AI as a whole.

These companies are trying to hype their products to raise their valuation. This doesn’t mean the claims are false or that impacts aren’t real, but it does mean they have incentives to overhype or sensationalize claims. For example, much of the “it’s too powerful to release” aspect is a publicity stunt played before with GPT-2, a move OpenAI seems poised to repeat.

The Mythos announcement created two predominant impressions in cybersecurity:

- Mythos represents the end of cybersecurity

- Mythos will bring a vulnerability apocalypse

This is a lot of speculation about a tool none of us has been able to evaluate, which in turn leads to speculation about what it means more broadly. I wrote about this last week on LinkedIn. The number of cybersecurity people genuinely depressed over the Mythos announcement is mind-boggling. Everywhere I turn, speculation is rampant, with countless people claiming this is the end of cybersecurity.

The other major fear is that attackers, or even script kiddies, will use these tools to industrialize 0-day exploits and wreak havoc, increasing not just the number of vulnerabilities but also the number of highly impactful ones. The impression is that we’ll have something like a Heartbleed event every week.

Perspectives like these fundamentally miss the forest for the trees.

To state it simply, Mythos and other more capable models won’t usher in the end of cybersecurity or a vulnerability apocalypse. Or at least not in the foreseeable future. The realities are much more mundane. These things are tools.

Some Perspective

Much of the concern seems to be around things that are already here. Attackers and defenders can identify these issues today using skill, tools, or even currently available AI resources. People seem to forget things like Google’s Big Sleep. So, the future problems people worry about are largely already here, with some caveats, of course.

Despite the speculation, there are reasons to doubt the proposed inevitabilities of Mythos. Reading the published details, there are things that should give us pause, as well as some obvious hype. I won’t spend time dissecting the entire report, since it’s not overly relevant to what security leaders should be doing as a result, but there are a few interesting points to consider.

A Misconception

To start with, the report highlights a misconception. In one area, they state: “Because these codebases are so frequently audited, almost all trivial bugs have been found and patched.” The perception that open-source projects have many audits because the code is available has always been a misconception. If this were true, we wouldn’t need organizations like OSTIF to fund audits. Certainly, it should be acknowledged that not all open-source projects are audited equally, and while the OSS-Fuzz corpus may receive more attention, the misconception holds.

The 0-Days

Also, given the more than a thousand critical bugs they claim to have, the bugs they chose to highlight are puzzling. If you are sitting on a mountain of critical bugs, why highlight issues that can’t be exploited for remote code execution (RCE), when RCE bugs would have been far more impactful? It seems like something they would have mentioned, even if they can’t disclose the technical details due to responsible disclosure.

For example, the 27-year-old BSD bug often touted as the pinnacle of the announcement can’t be used for RCE. At best, it can be used to crash a system. Anthropic mentioned they spent nearly $20,000 on this, as well as on what we can only consider lesser bugs. To be fair, you don’t know what you are going to find until you perform the testing.

To continue, the FFmpeg bug ($10,000) is not critical, and the Guest-To-Host memory corruption bug (???) only causes crashes, with Mythos unable to produce a functional exploit. That’s the summary of the three 0-days they highlighted. Later, they do describe an RCE in FreeBSD running NFS, but it’s in a different section.

Despite claiming to have thousands of high- and critical-severity bugs, they also state they cannot confirm this with certainty. This is because they engaged contractors to manually review 198 of these reports. There isn’t much information about the 198 cases they chose to review, other than that the contractors agreed with Mythos on severity level 89% of the time. Although this may hold true, these are assumptions, and it would only apply to this specific class of vulnerabilities they chose to highlight. Would it be the same for other vulnerability classes? Maybe. We’ll have to wait and see.
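
To put the 89% figure in perspective, here is a rough sketch of the uncertainty that comes with a sample of 198. It uses a standard Wilson score interval and assumes the 198 reports were a random sample of the full set, which the report does not establish. The exact count of agreeing reports is also an assumption, rounded from 89% of 198.

```python
import math

def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p_hat = successes / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width

reviewed = 198                    # number of manually reviewed reports, per the report
agreed = round(0.89 * reviewed)   # assumption: exact count is not given, only the 89%
low, high = wilson_interval(agreed, reviewed)
print(f"agreement: {agreed}/{reviewed}, approx. 95% interval: {low:.1%} to {high:.1%}")
```

Even under that generous random-sample assumption, the plausible range spans several percentage points on either side, and it says nothing about vulnerability classes outside the ones reviewed.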

Inexperience

They also claim that: “Engineers at Anthropic with no formal security training have asked Mythos Preview to find remote code execution vulnerabilities overnight, and woken up the following morning to a complete, working exploit.” This sounds scary until you think of follow-up questions. RCEs in what? What were the conditions? How many people? Was the existing scaffolding created by security experts? Etc.

FP Rates and Benchmarking

They also didn’t mention anything about false positive rates. This could be because they chose to go after memory safety issues, which are easy to verify, but this won’t hold equally true for other vulnerability categories. False positive rates are important because they indicate how much work is required to triage and interpret the output.

They also didn’t benchmark their vulnerability discovery approach against currently available tools. I admit, in a certain sense, this is nitpicky because results are results, and how many things could we really expect them to do as part of this research? However, when someone spends $10,000 in tokens to identify a SQL Injection vulnerability in code, even though they could have run a tool like Semgrep for essentially zero cost, it seems like an important distinction. It would also help to understand where some of these traditional tools excel and where Mythos excels, so one can identify where one can fill the other’s gap.

Insightful Observations

None of the criticism is meant to dismiss the impressive nature of some of the model’s capabilities and their approach, but simply to call into question some of the surrounding hype. There are other things in the report that are genuinely insightful.

For example, giving attackers the ability to create working exploits for known vulnerabilities is incredibly impactful because it doesn’t matter where the vulnerability originated. The result is the same: working exploits that can be launched against unpatched systems. Note that this condition exists today outside of Mythos, minus the potential increase in speed.

Another interesting observation is that Mythos was able to chain together complex sequences of vulnerabilities and maintain a persistence that humans may not. In one case, a vulnerability was assumed to be non-exploitable because protections were in place, only to turn out to be exploitable once exploitation was actually attempted. This is insightful.

This persistence could lead to the identification of vulnerabilities that a human may assume are non-exploitable and call into question protections that make exploitation tedious, rather than impossible, as they claim. This is a solid takeaway for the AI era. However, we can’t ignore the economic aspect in these scenarios, which will be covered in the next section.

The most interesting aspect is their scaffolding. This is an approach you can use today to find vulnerabilities, without needing access to Mythos, using available models.

Economics Matter

Economics becomes an incredibly important factor when discussing AI. I mentioned the $20,000 and $10,000 discovery costs, which apply to the first two examples, but after that the mention of costs disappears. There is no clear indication of the economics of these activities. Whether you’re spending money on humans or tokens, cost matters, and it doesn’t matter whether you are an attacker or a defender. The question becomes:

How much money is anyone (attacker/defender) willing to spend to find a vulnerability that may or may not be in a system?

It’s a question we’ve dealt with for decades in security, but it becomes front and center with highly capable models. You don’t know whether highly impactful bugs exist in a system at the start.

In essence, the activity becomes something like a vulnerability slot machine for both the attacker and the defender. Only for defenders, a loss (not finding bugs) can still be a win, depending on the application’s risk profile and the amount of money spent.
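
The slot machine framing can be sketched as a simple expected-value calculation. Every number below is hypothetical and chosen only to illustrate the shape of the tradeoff; `HuntScenario` and its fields are names invented for this example, and treating runs as independent attempts is a simplifying assumption.

```python
from dataclasses import dataclass

@dataclass
class HuntScenario:
    cost_per_run: float     # hypothetical spend per discovery campaign (tokens or people)
    p_find: float           # assumed chance that one run surfaces an impactful bug
    value_if_found: float   # assumed value of that bug to whoever finds it

def expected_net_value(s: HuntScenario, runs: int) -> float:
    """Expected payoff minus total spend, treating runs as independent attempts."""
    p_at_least_one = 1 - (1 - s.p_find) ** runs
    return p_at_least_one * s.value_if_found - runs * s.cost_per_run

# Hypothetical inputs, not figures from the report.
scenario = HuntScenario(cost_per_run=10_000, p_find=0.05, value_if_found=250_000)
for runs in (1, 5, 10, 20):
    print(f"{runs:>2} runs: expected net value {expected_net_value(scenario, runs):>10,.0f}")
```

With these made-up inputs, the expected return is positive for a few runs and turns negative as spending continues, which is the point: at some budget, pulling the lever again stops being worth it for attacker and defender alike.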

These economic issues are becoming front and center with generative AI. The Information recently reported that Uber has already blown through its entire $3.4B budget for 2026 due to AI costs.

This should be a cautionary tale for security leaders, whether adopting AI-heavy tools or AI-heavy approaches.

The costs today will not be the costs tomorrow.

The reality is that token costs with large models from AI labs are subsidized by venture capital today, which means you are getting tokens for less than they are worth. This isn’t sustainable, and investors will want a return on their investment, which means price increases. This is something Marcus Hutchins pointed out as well in the days following the announcement. Imagine costs doubling or even tripling. Would it still be worth it? For some, this increase is happening already.

In some cases, rather than price increases, providers will choose to lower usage limits, which is the same thing phrased differently. This is something that Anthropic has already been doing. Anthropic appears to be nerfing models and reducing usage rates, something their customers are not happy about, as can be seen in this Axios article.

With the launch of Opus 4.7, people have noticed, and they aren’t happy.

AI token costs directly impact security use cases, which makes things like vulnerability discovery, AI SOC, and other use cases a matter of value for the cost. As I mentioned previously, this is not a new problem. It has been a problem since the beginning of cybersecurity, but it isn’t talked about much when it comes to AI. That will certainly change.

Beyond the cost factor, this creates a heavy dependency on 3rd-party products that you have little control over. It’s not just the cost fluctuations that affect you. It’s any reduction in capabilities, deprecation of models, or a host of other factors. This effect applies to the shiny new AI-powered products you purchase as well. With many products and approaches, pushing at full blast with the most capable models will make cost an even bigger issue.

My friend Anant Shrivastava calls this scenario “rented cognition.” I like this phrasing because it highlights a condition that costs you money and that you also don’t own. Both costs and capabilities are beyond your control, meaning you are at the mercy of any price increases as well as any limitations or changes in capabilities. Not the best scenario to build your entire foundation on.

In short, as a security leader, you have to consider the future economics of these approaches today, as tools and products that rely heavily on generative AI are purchased.

We shouldn’t forget that these same constraints affect attackers as well. A determined attacker doesn’t have an unlimited budget, though there is a catch: security leaders need to factor into their calculus that some adversaries are well-funded. Think of nation states, APT groups, and ransomware operators, for example.

Tools are Tools

The fear that script kiddies will use AI to accelerate their activities is not new. At Black Hat USA in 2023, amid these same concerns, I said that when Metasploit was originally launched, people said it was like giving nukes to script kiddies. Metasploit is a tool in a toolkit, much like AI-powered tools are today. The reality is that a certain level of competency is required to use these tools effectively.

The counterargument is that a model like Mythos will drop the skill floor significantly, allowing anyone to conjure vulnerabilities like a magic spell. It’s not impossible that the skill floor becomes reduced, but it isn’t likely to happen in a meaningful way in the near future. Skill will still be required. Besides, if this is the case, the same tools available to these inexperienced attackers will be available to defenders.

We already have tools today, both AI-powered and non-AI-powered, that allow people to find vulnerabilities in software and systems. Despite the availability of these tools, detectable vulnerabilities persist. This demonstrates a disconnect in incentives for identifying and remediating vulnerabilities.

Many organizations aren’t spending enough on cybersecurity, and the message hidden in the fear is that if you throw more money at the problem by buying more tools, now AI-powered tools, these issues will subside. That isn’t the case. The cybersecurity space is packed to the brim with tools. Sure, less than perfect tools, but tools nonetheless, and buying more tools won’t save you.

Every organization is different, but in many cases, tools aren’t the problem. The problem lies in organization, expertise, and process.

Vulnerabilities Will Persist

More code is being written now than at any point in history. People are using AI to produce more code than ever, and much of that code isn’t being thoroughly evaluated for vulnerabilities. To think we are on the verge of solving this cybersecurity issue is to be disconnected from reality.

Vulnerabilities persist today despite the tools and humans available to identify them. This will remain true in a post-Mythos world. We’ll find that these models excel at some things, but not at others. We’ll find that some people are unwilling to incur the cost of identifying these bugs, and that organizations don’t consider all applications equally valuable. The same as today.

Security leaders need to think strategically about how to ensure they catch these vulnerabilities before they reach production. In addition, you need to strengthen your application security practices to account for the rapid patching that may be necessary due to the shortened time between identification and exploitation.

When The Code Doesn’t Exist

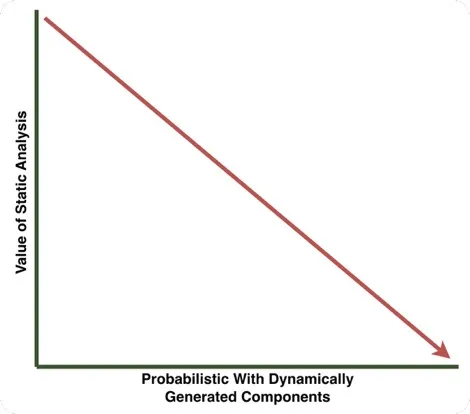

Much of the public emphasis surrounding Mythos has been focused on its usage for static analysis and reverse engineering, but there are other problems with the idea that this is the end of cybersecurity. During our Black Hat USA talk in 2025, we pointed out a growing trend in which code doesn’t exist until the application runs. This involves systems and agents that perform code generation and execution tasks. You can’t perform static analysis on code that doesn’t exist. As this trend increases, the value of static analysis decreases.

The dynamically generated nature of these applications means that every application you build may be immediately untrustworthy, and performing static analysis to identify vulnerabilities doesn’t give you a decent picture of the risk. This requires an approach that places heavy emphasis on architectural controls, making architecture reviews highly important in this environment.

Attackers Don’t Need 0-Days

Most adversaries compromising systems today aren’t utilizing 0-day attacks. They are using the same playbook they’ve always used: social engineering attacks such as phishing, exploiting misconfigurations, exploiting passwords, and many other more mundane but highly effective techniques. Attackers will continue to use what works.

In the near future, we may certainly have more identified vulnerabilities, but the idea that all of these will be actively exploited is unrealistic. An increase, yes, but an apocalypse, no. This is something Jeremiah Grossman also calls out in the article “The AI Vulnerability Surge That Doesn’t Change a Thing.”

As I alluded to earlier, being an adversary is a business. They also have cost and effort constraints. They use tools and techniques that are the best tools for the job. Why spend countless sums on identifying a 0-day in the hopes that it works for the specific organization you want to exploit, when a misconfigured system or a social engineering attack will net the same result?

Advice For Security Leaders

The good news is that we already know how to defend ourselves from the impacts of something like Mythos today, and you may already be doing much of what’s necessary to defend your organizations. The advice is largely the same, familiar advice doled out over the past decade, except that it is now more important, with more emphasis on speed.

We don’t need to hope that new products are invented. Using models like Mythos for cybersecurity tasks doesn’t create new vulnerabilities or new vulnerability classes. It’s being used to identify and exploit existing conditions in systems and code.

What more capable models like Mythos mean for defenders is compressed timelines and a need for speed in remediation. This is arguably something many organizations are not good at, but may no longer have the luxury of avoiding.

The irony here is that, despite the fear, enhanced model capabilities benefit defenders, but only if they are realized and utilized properly. The key is identifying security activities where defenders can most effectively apply AI.

Take A Breath

The trend is to treat AI as though it’s magic instead of a technology. Far too many people read clickbait headlines instead of the details. Quite often, wading through the details of a report brings insight. And if clear claims aren’t being made with proof, then don’t invent them. Don’t perform the marketing for companies on their behalf.

Use What You Have

Panic-buying more technology is not a recipe for success, especially when the AI label is attached. This can actually make your condition worse, leading to issues such as tool sprawl or obligating you to use semi-functional tools that are still under development and may change, increase in price, or disappear at any moment.

Review your current security operations and processes. Purchase new technology only when there is a specific identified gap, and be purposeful in selecting tools. Ensure you don’t already have that capability in your stack.

Automate What You Can

Attackers are using automation, and you should too. You certainly can’t automate everything, but identifying quick wins can help your team pivot to focus on other activities. If there is tedious work, backlogs, or other drudgery, look for ways to move that work off defenders’ plates so they can focus on more of what matters.

Vulnerability Management and Patching

Mythos and other highly capable models promise not only an increase in vulnerabilities but a vastly shorter time between identification and exploitation.

Defense is typically slow. Identifying a vulnerability, prioritizing its severity, communicating it to the responsible groups, and then working through their backlog and patching processes all take time, too much time in many cases.

We need to prepare for even faster adversaries, which can significantly reduce the time defenders have to go from identification to remediation. This also needs to be based on risk, because not all vulnerabilities should receive the same priority, and spending effort patching a mountain of low-severity vulnerabilities takes time away from vulnerabilities that actually matter.

Review your program and tooling, apply Risk-Based Vulnerability Management (RBVM), use automation where applicable, and remove as many roadblocks as possible to shorten the time between the identification of impactful vulnerabilities and their remediation. Organizations have gotten away with lagging in this area, which is a luxury the future doesn’t afford.
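
As a rough illustration of what risk-based prioritization means in practice, the sketch below ranks a small backlog by more than raw severity. The `Finding` fields, the multipliers, and the sample data are all hypothetical; a real RBVM program would use its own asset inventory, threat intelligence, and weighting.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    vuln_id: str
    severity: float           # e.g. a CVSS base score from 0 to 10
    exploit_available: bool   # is a working exploit known to exist?
    internet_facing: bool     # is the affected asset reachable from the internet?
    asset_criticality: int    # hypothetical scale: 1 (low) to 5 (crown jewels)

def risk_score(f: Finding) -> float:
    """Illustrative weighting only; the multipliers are assumptions to be tuned."""
    score = f.severity * f.asset_criticality
    if f.exploit_available:
        score *= 2.0
    if f.internet_facing:
        score *= 1.5
    return score

def prioritize(findings: list[Finding]) -> list[Finding]:
    """Highest risk first."""
    return sorted(findings, key=risk_score, reverse=True)

# Made-up backlog for illustration.
backlog = [
    Finding("VULN-A", 9.8, False, False, 1),
    Finding("VULN-B", 7.5, True, True, 4),
    Finding("VULN-C", 5.3, False, True, 2),
]
for f in prioritize(backlog):
    print(f.vuln_id, round(risk_score(f), 1))
```

In this made-up backlog, the finding with the highest raw severity lands last because it sits on a low-value, internal asset with no known exploit. That is the kind of reordering that keeps teams from burning time on a mountain of findings that don’t matter.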

AppSec and Product Security

If your organization develops software, then having strong application and product security foundations is key. This is especially true if you utilize AI to increase coding output. You need to ensure you can proactively identify software vulnerabilities throughout the development lifecycle.

This approach requires a combination of both process and tooling. The focus should be on identifying issues early. Educate developers on the risks and implement processes for performing threat modeling and architecture reviews. These activities should be combined with tooling that performs static and dynamic analysis.

Software is often built on prior foundations, and threats to software products can come from components such as 3rd-party libraries. Often, these are open-source libraries. A process for inventorying software components and building a software bill of materials (SBOM) should be implemented with assurances that it’s accurate and up to date.

SBOMs alone won’t ensure greater security. SBOMs should be paired with processes and tooling for vulnerability monitoring to ensure 3rd-party vulnerabilities are identified promptly and addressed quickly.
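
A minimal sketch of that pairing: walk the components in an SBOM and check each against an advisory feed. The SBOM below is a heavily simplified stand-in for a CycloneDX-style document, and the advisory feed, package names, and advisory IDs are all invented for illustration. Exact version matching is also a simplification, since real advisories typically describe affected version ranges.

```python
import json

# Simplified stand-in for a CycloneDX-style SBOM; real SBOMs carry many more fields.
SBOM_JSON = """
{"components": [
  {"name": "libexample", "version": "1.2.3"},
  {"name": "fastparse", "version": "4.0.1"}
]}
"""

# Hypothetical advisory feed: (package name, affected version) -> advisory ID.
ADVISORIES = {
    ("libexample", "1.2.3"): "EXAMPLE-2026-0001",
    ("otherlib", "0.9.0"): "EXAMPLE-2026-0002",
}

def affected_components(sbom: dict, advisories: dict) -> list[tuple[str, str, str]]:
    """Return (name, version, advisory_id) for each component with a matching advisory."""
    hits = []
    for comp in sbom.get("components", []):
        key = (comp.get("name"), comp.get("version"))
        if key in advisories:
            hits.append((key[0], key[1], advisories[key]))
    return hits

sbom = json.loads(SBOM_JSON)
for name, version, advisory in affected_components(sbom, ADVISORIES):
    print(f"{name} {version} is affected by {advisory}")
```

Run on a schedule against a current SBOM, even a check this simple turns the inventory into something actionable. The hard parts are keeping the SBOM accurate and routing the hits to someone who will patch.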

Detection and Response

The ability to detect is key. There may be conditions in which something is exploited and no patch is available. This means you need the ability to detect when an attacker has breached a system and may be pivoting, exfiltrating data, or taking a range of other actions. The benefit here is that this is valuable even in conditions where an attacker didn’t exploit a 0-day.

Ensure your incident response processes are up to date and your incident responders understand their responsibilities. Don’t wait until you have an incident to find out that things don’t work.

Token Minimizing

There is an odd trend called “tokenmaxxing,” where people use as many AI tokens as possible to signal productivity and status. This is the exact opposite of what organizations should be doing.

Organizations should work to reduce token usage, especially with the most capable models. Throwing caution to the wind and using as many tokens as possible is a good way to increase costs and dependence.

Experiment with approaches and architectures that reduce the dependence on the most cutting-edge large models. In many cases, evaluate if automation can be done with more traditional, deterministic approaches. Regardless of the marketing, cutting-edge AI isn’t the best tool for the job in all cases. Trust me, your budget and system reliability will thank you.
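
One way to picture this is a "deterministic first" pattern: handle the common cases with cheap rules and reach for a model only when nothing matches. The sketch below is an assumption-laden illustration, not a recommendation of specific rules. The rule set, the labels, and `fake_model` (a placeholder standing in for a metered model call) are all hypothetical.

```python
import re
from typing import Callable

# Deterministic rules that cover common, cheap-to-recognize cases (illustrative only).
RULES = [
    (re.compile(r"failed password", re.IGNORECASE), "auth_failure"),
    (re.compile(r"port scan", re.IGNORECASE), "recon"),
]

def classify(event: str, model_fallback: Callable[[str], str]) -> tuple[str, bool]:
    """Return (label, used_model). The model is called only when no rule matches."""
    for pattern, label in RULES:
        if pattern.search(event):
            return label, False
    return model_fallback(event), True

def fake_model(event: str) -> str:
    # Placeholder for a real, metered model call; hypothetical and returns a fixed label.
    return "needs_review"

events = [
    "Failed password for root from 203.0.113.7",
    "Possible port scan detected from 198.51.100.4",
    "Unusual outbound transfer to unknown host",
]
results = [classify(e, fake_model) for e in events]
model_calls = sum(used for _, used in results)
print(results)
print(f"model calls: {model_calls}/{len(events)}")
```

Here only one of the three sample events reaches the model. Tracking that ratio over time is a simple way to see how much of a workload actually needs the expensive path.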

Expect Surprises

Ultimately, we need to prepare for surprises. There is a lot of uncertainty in the world. Trends will come and go, but the assets we need to protect are the same. Create solid foundations that account for unknowns and implement agility where possible.

Conclusion

The story being told is rather sensational, but when you strip away the headlines and the hot takes, what’s left is a message that’s far more familiar. The problems haven’t fundamentally changed. We have the same story with different tools.

It’s a warning not to let hype dictate strategy. Fear-driven decisions lead to bloated toolchains, runaway costs, and fragile dependencies on capabilities you don’t control. “Rented cognition” may feel powerful today, but it comes with long-term tradeoffs that must be understood and managed.

We have an opportunity to double down on what works. Strong fundamentals such as risk-based vulnerability management, disciplined patching, secure development practices, detection and response, and thoughtful automation are not made obsolete by AI. If anything, they become more important. Faster attackers simply compress timelines. They don’t rewrite the rules.